As part of my PhD research, I am working on extending the depth of field of iris recognition cameras. In a series of upcoming blog posts, I would like to share some of the things I have learnt during the project. This post, the first in the series, is an introduction to iris recognition as a biometric technology. I believe the material presented here could help someone new to iris recognition get a quick yet comprehensive overview of the field. In the following paragraphs, I describe the anatomy of the iris and what makes it so special as a biometric, followed by the general basis of iris-based verification and the four major constituents of a general iris recognition system.

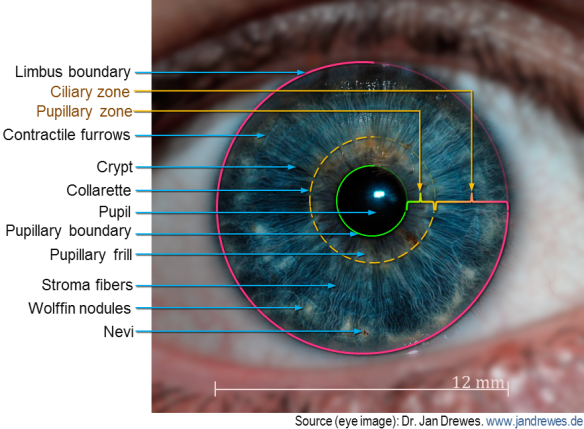

The human iris is the colored portion of the eye, with a diameter that ranges between 10 mm and 13 mm [1,2]. The iris is perhaps the most complex tissue structure in the human body that is visible externally. The iris pattern has most of the desirable properties of an ideal biomarker, such as uniqueness, stability over time, and relatively easy accessibility. Being an internal organ, it is also protected from damage due to injuries and/or intentional tampering [3]. The presence or absence of specific features in the iris is largely determined by heredity (based on genetics); however, the spatial distribution of the cells that form a particular iris pattern during embryonic development is highly chaotic. This pseudo-random morphogenesis, which is determined by epigenetic factors, results in iris patterns that are unique to every individual, including identical twins [2,4,5]. Even the iris patterns of the two eyes of the same individual are largely different. The diverse microstructures in the iris that manifest at multiple spatial scales [6] are shown in Figure 1. These textures, unique to each eye, provide distinctive biometric traits that are encoded by an iris recognition system into distinctive templates for the purpose of identity authentication. It is important to note that the color of the iris is not used as a biomarker, since it is determined genetically and is not sufficiently discriminative.

Figure 1. Complexity and uniqueness of the human iris. Fine textures on the iris form unique biometric patterns, which are encoded by iris recognition systems. (Original image processed to emphasize features.)

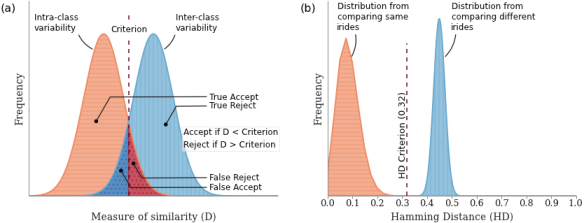

The problem of iris recognition is analogous to binary classification. It involves grouping the members of a set of objects into two classes based on a suitable measure of similarity (Figure 2 (a)). Similar objects cluster together as they exhibit less variability within the same class. In Figure 2 (a), the intra-class variability (randomness within a class) and the inter-class variability (randomness between classes) are plotted as a function of an appropriate similarity measure, D. The degree of variability is proportional to the uncertainty of D. For example, in face recognition, the intra-class variability would be the uncertainty of identifying a person’s face imaged under varying lighting conditions, poses, times of acquisition, etc.; the inter-class variability, on the other hand, is the variability between the faces of different subjects. Naturally, the degree of similarity is higher (corresponding to lower uncertainty) in the former case. As shown in the figure, there are four possible outcomes for the binary classification problem based on the two possible choices in the decision. The region of overlap produces the two types of error rates. The False Accept (or False Positive) is the area of overlap to the left of the decision criterion, and the False Reject (or False Negative) is the area of overlap to the right of the decision criterion. Objects can be reliably classified only if the intra-class variability is less than the inter-class variability [2], in which case the two distributions are sufficiently separated and the degree of overlap is minimal. Figure 2 (b) (adapted from [5]) is a plot of the distributions of Hamming distances (defined later) for 1208 pairwise comparisons of irises from the same eye and 2064 pairwise comparisons of irises from different eyes. The figure shows that the two distributions are well separated, indicating the robustness of using Hamming distances for the iris recognition problem.

Figure 2. Iris recognition as a binary classification problem. (a) A schematic of the relationship between the intra-class variability, the inter-class variability and the four possible outcomes; (b) Distribution of the variabilities as a function of Hamming distance for iris recognition. The figures have been adapted from [5].
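To make the two error types concrete, here is a minimal sketch that counts false rejects and false accepts for a chosen decision threshold on a distance measure. The function name `error_rates` and the sample distances are made up purely for illustration; they are not data from [5].

```python
def error_rates(genuine, impostor, threshold):
    """Return (false_reject_rate, false_accept_rate) for a distance threshold.

    A comparison is declared a match when its distance is <= threshold.
    `genuine` holds distances between samples of the same eye,
    `impostor` holds distances between samples of different eyes.
    """
    false_rejects = sum(1 for d in genuine if d > threshold)
    false_accepts = sum(1 for d in impostor if d <= threshold)
    return false_rejects / len(genuine), false_accepts / len(impostor)


# Hypothetical distances, chosen only to show both kinds of error.
genuine = [0.05, 0.10, 0.12, 0.20, 0.35]
impostor = [0.30, 0.42, 0.45, 0.48, 0.50]

frr, far = error_rates(genuine, impostor, threshold=0.33)
print(f"False reject rate: {frr:.2f}, false accept rate: {far:.2f}")
```

Moving the threshold to the left lowers the false accept rate at the cost of more false rejects, and vice versa, which is why well-separated distributions matter.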

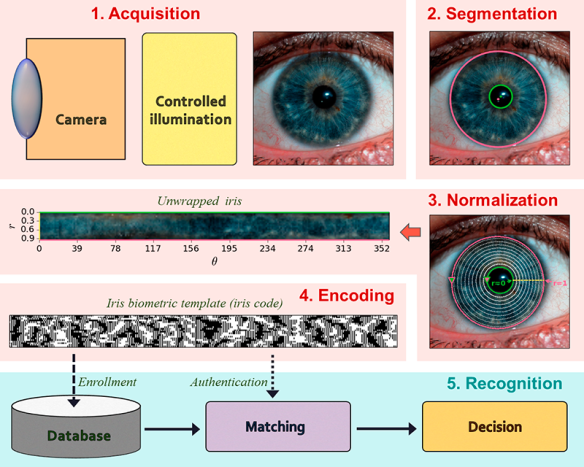

Generation of the iris code broadly consists of four basic steps. An additional matching step is required for the task of identification of a subject based on the iris code [7,8]. A schematic of this pipeline is shown in Figure 3.

Figure 3. Overview of iris biometric code generation. The different subsystems of iris recognition include the acquisition module, the segmentation module, the normalization module, the encoding module and the recognition module.

The steps are briefly described below:

1. Iris acquisition

Iris encoding/recognition starts with the acquisition of a high-quality image of a subject’s eye. Almost all iris acquisition systems use near-infrared (NIR) illumination in the 720–900 nm wavelength range for iris capture. NIR illumination provides greater visibility of the intricate structure of the iris, which is largely unaffected by pigmentation variations in the iris [2,7,9]. There has also been some evidence of the benefits of using visible illumination for iris acquisition, especially for large standoff distances and unconstrained environments [10]. Some research is also underway on multi-spectral iris acquisition. Two main challenges for iris acquisition systems are large standoff distances and the ability to acquire iris images within a large volume.

2. Segmentation and localization

The module that follows the capture of an iris image of acceptable quality is generally known as segmentation and localization. The goal of this step is to accurately determine the spatial extent of the iris, locate the pupillary and limbic boundaries, and identify and mask out regions within the iris that are affected by noise such as specular reflections, superimposed eyelashes, and other occlusions that may affect the quality of the template [7]. A wide gamut of algorithms has been proposed for the segmentation and localization of iris regions, such as Daugman’s integro-differential operator [2,5], circular Hough transforms along with Canny edge detection [3,6], binary thresholding and morphological transforms [11,12], bit-plane extraction coupled with binary thresholding [13,14], and active contours [15–17].

Segmentation and localization is perhaps the most important step in the process of biometric template generation once a high-quality iris image has been acquired. This is because the performance and accuracy of the subsequent stages are critically dependent on the precision and accuracy of the segmentation stage [15].
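As a rough illustration of how a circular boundary can be located, the sketch below averages the image intensity along circles of increasing radius around a known center and picks the radius at which that average changes most abruptly. This is only a heavily simplified cousin of the integro-differential idea: it assumes the center is already known, applies no smoothing, and searches over the radius alone. The function names and the synthetic test image are assumptions made for this example.

```python
import math


def circle_mean(image, cx, cy, radius, n_samples=64):
    """Mean intensity sampled along a circle (nearest-pixel lookup)."""
    height, width = len(image), len(image[0])
    total, count = 0.0, 0
    for k in range(n_samples):
        t = 2 * math.pi * k / n_samples
        x = int(round(cx + radius * math.cos(t)))
        y = int(round(cy + radius * math.sin(t)))
        if 0 <= x < width and 0 <= y < height:
            total += image[y][x]
            count += 1
    return total / count if count else 0.0


def find_boundary_radius(image, cx, cy, r_min, r_max):
    """Radius in [r_min, r_max] with the largest jump in circular mean."""
    means = [circle_mean(image, cx, cy, r) for r in range(r_min, r_max + 1)]
    best_r, best_jump = r_min, 0.0
    for i in range(1, len(means)):
        jump = abs(means[i] - means[i - 1])
        if jump > best_jump:
            best_jump, best_r = jump, r_min + i
    return best_r


# Synthetic 64x64 image: a dark disc (a stand-in "pupil") of radius 10
# centered at (32, 32) on a bright background.
image = [[0 if (x - 32) ** 2 + (y - 32) ** 2 <= 100 else 200
          for x in range(64)] for y in range(64)]

pupil_radius = find_boundary_radius(image, 32, 32, r_min=3, r_max=25)
print("Estimated pupil radius:", pupil_radius)
```

The search range (`r_min`, `r_max`) has to bracket the true boundary; in practice it would be chosen from the expected iris and pupil sizes in pixels for the camera in use.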

3. Unwrapping/Normalization

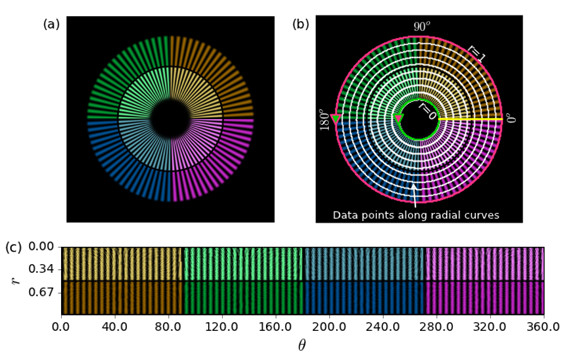

The spatial extent of the iris region varies greatly between image captures due to magnification, eye pose, and pupil dilation/constriction [5,7]. Furthermore, the inner and outer boundaries of the iris are not concentric, and they deviate considerably from perfect circles. Before the biometric code is generated, the segmented iris is geometrically transformed into a scale- and translation-invariant space in which the radial distance between the iris boundaries along different directions is normalized between the values of 0 (pupillary boundary) and 1 (limbic boundary) [2]. This unwrapping process, shown schematically in Figure 4, consists of two steps. First, a number of data points are selected along multiple radial curves interspaced between the two iris boundaries. Next, these points, which are in Cartesian coordinates, are transformed into the doubly dimensionless polar coordinate system of two variables, $r$ and $\theta$. As a result, an iris imaged under varying conditions, when compared in the normalized space, will exhibit characteristic features at the same spatial locations.

Figure 4. Schematic of the normalization process using a spoke pattern. (a) A spoke pattern; (b) The green and the magenta circles represent the pupil and iris boundaries, the white markers along the radial curves represent selected points, and the yellow line represents θ = 0°; (c) The unwrapped spoke pattern after the normalization process in (r, θ) coordinates.
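The unwrapping can be sketched as a mapping from normalized polar coordinates back to image coordinates. The example below assumes, for simplicity, that both boundaries are circles given as `(cx, cy, radius)` tuples (possibly non-concentric) and that each sample point is a linear blend of the pupillary and limbic boundary points at the same angle. The function names and the boundary values are hypothetical; real boundaries would come from the segmentation stage.

```python
import math


def rubber_sheet_point(r, theta, pupil, limbus):
    """Map normalized (r, theta) to Cartesian (x, y).

    r = 0 lies on the pupillary boundary, r = 1 on the limbic boundary.
    `pupil` and `limbus` are (cx, cy, radius) circles (an assumption;
    real boundaries deviate from perfect circles).
    """
    px = pupil[0] + pupil[2] * math.cos(theta)
    py = pupil[1] + pupil[2] * math.sin(theta)
    lx = limbus[0] + limbus[2] * math.cos(theta)
    ly = limbus[1] + limbus[2] * math.sin(theta)
    return (1 - r) * px + r * lx, (1 - r) * py + r * ly


def sampling_grid(pupil, limbus, n_radial=8, n_angular=16):
    """Rows index r in [0, 1]; columns index theta in [0, 2*pi)."""
    grid = []
    for i in range(n_radial):
        r = i / (n_radial - 1)
        row = [rubber_sheet_point(r, 2 * math.pi * j / n_angular, pupil, limbus)
               for j in range(n_angular)]
        grid.append(row)
    return grid


# Hypothetical, slightly non-concentric boundaries in pixel units.
pupil = (100.0, 100.0, 20.0)
limbus = (102.0, 98.0, 60.0)
grid = sampling_grid(pupil, limbus)
print("Pupil-side sample at theta = 0:", grid[0][0])
print("Limbus-side sample at theta = 0:", grid[-1][0])
```

Sampling the eye image at these grid locations (typically with interpolation) gives a fixed-size rectangular array regardless of pupil size or magnification.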

4. Encoding – generation of iris code

The encoding process produces a binary feature vector from an ordered sequence of features extracted from the normalized iris image. A large number of iris recognition systems encode the local phase information following multi-resolution filtering by quantizing the phase at each location using two bits. Commonly used filters and feature extractors include 2-D Gabor filters [2,5,18], log-Gabor filters [3], multi-level Laplacian pyramids [6], PCA and ICA [16], etc. As with the segmentation module, there are numerous algorithms for iris pattern encoding.
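The two-bit phase quantization can be sketched as follows. This is a simplified illustration and not the encoder of any particular system: it filters a single row of the normalized image with a 1-D complex Gabor kernel (wrapping around in the angular direction) and keeps only the signs of the real and imaginary parts of each response. The kernel parameters and the test signal are arbitrary assumptions.

```python
import cmath
import math


def gabor_kernel(length, wavelength, sigma):
    """1-D complex Gabor kernel: Gaussian envelope times complex sinusoid."""
    half = length // 2
    return [math.exp(-(n * n) / (2 * sigma * sigma))
            * cmath.exp(2j * math.pi * n / wavelength)
            for n in range(-half, half + 1)]


def filter_circular(signal, kernel):
    """Filter a mean-removed signal, wrapping around at the ends."""
    n = len(signal)
    half = len(kernel) // 2
    mean = sum(signal) / n
    responses = []
    for i in range(n):
        acc = 0j
        for k, weight in enumerate(kernel):
            acc += (signal[(i + k - half) % n] - mean) * weight
        responses.append(acc)
    return responses


def quantize_phase(responses):
    """Two bits per response: sign of the real part, sign of the imaginary part."""
    bits = []
    for z in responses:
        bits.append(1 if z.real >= 0 else 0)
        bits.append(1 if z.imag >= 0 else 0)
    return bits


# Hypothetical row of a normalized iris image (32 angular samples).
row = [128 + 50 * math.sin(2 * math.pi * i / 8) for i in range(32)]
code = quantize_phase(filter_circular(row, gabor_kernel(9, 8.0, 2.0)))
print(len(code), "bits:", "".join(map(str, code)))
```

Because only the quadrant of the phase is kept, the code is insensitive to the overall contrast and brightness of the image.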

5. Recognition – verification/authentication of identity

The recognition of an iris for subject verification/authentication requires an additional matching step that measures the similarity of a template generated from a newly acquired iris image to one or many templates stored in a database. The most common technique for comparing two iris codes is the normalized Hamming distance (HD), which is the fraction of bit locations at which two binary vectors differ [16]. For example, the HD between two complementary vectors (every bit differs) is 1, whereas the HD between two identical vectors is 0. While an HD of 0 between two iris images from the same eye is highly improbable in practical scenarios owing to noise, an HD of 0.33 or less indicates a match [2].
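A minimal sketch of the normalized Hamming distance is shown below. The optional masks, which exclude bits flagged as noisy during segmentation, are an addition made for this example, as are the function name and the sample codes. The 0.33 threshold is the value quoted above.

```python
def hamming_distance(code_a, code_b, mask_a=None, mask_b=None):
    """Fraction of differing bits, counted only where both masks are 1."""
    n = len(code_a)
    if len(code_b) != n:
        raise ValueError("iris codes must have the same length")
    mask_a = mask_a if mask_a is not None else [1] * n
    mask_b = mask_b if mask_b is not None else [1] * n
    valid = [bool(ma and mb) for ma, mb in zip(mask_a, mask_b)]
    n_valid = sum(valid)
    if n_valid == 0:
        raise ValueError("no valid bits to compare")
    differing = sum(1 for a, b, v in zip(code_a, code_b, valid) if v and a != b)
    return differing / n_valid


# Hypothetical 8-bit codes; real iris codes are much longer.
code_a = [1, 0, 1, 1, 0, 0, 1, 0]
code_b = [1, 1, 1, 0, 0, 0, 1, 1]

hd = hamming_distance(code_a, code_b)
print("HD:", hd, "match" if hd <= 0.33 else "no match")
```

Practical matchers usually also compare several circularly shifted versions of one code and keep the smallest distance, to compensate for rotation of the eye between captures.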

A detailed explanation of the image processing and pattern recognition techniques employed in the field of iris recognition is beyond the scope of this post. Interested readers are referred to the work of Daugman [2,15] and the extensive survey of iris recognition by Bowyer et al. [10].

In the next post I will cover some of the desirable properties of iris recognition acquisition systems.

(The figures in this post were generated using a combination of Matplotlib, OpenCV (for segmentation, normalization and encoding of the iris in Fig. 3) and PowerPoint.)

Links to posts in this series

- Primer on iris recognition (this post)

- Desirable properties of iris acquisition systems

- The DOF problem in iris acquisition systems

References

1. E. Tabassi, P. Grother, and W. Salamon, “Iris Quality Calibration and Evaluation (IQCE): Performance of Iris Image Quality Assessment and Algorithms,” (2011).

2. J. Daugman, “How iris recognition works,” in 2002 International Conference on Image Processing. 2002. Proceedings (2002), Vol. 1, pp. I–33–I–36 vol.1.

3. L. Masek, “Recognition of human iris patterns for biometric identification,” Master’s thesis, University of Western Australia (2003).

4. A. Muron and J. Pospisil, “The human iris structure and its usages,” Acta Univ Palacki Phisica 39, 87–95 (2000).

5. J. G. Daugman, “High confidence visual recognition of persons by a test of statistical independence,” IEEE Trans. Pattern Anal. Mach. Intell. 15, 1148–1161 (1993).

6. R. P. Wildes, “Iris recognition: an emerging biometric technology,” Proc. IEEE 85, 1348–1363 (1997).

7. A. Ross, “Iris recognition: The path forward,” Computer 43, 30–35 (2010).

8. M. Vatsa, R. Singh, and P. Gupta, “Comparison of iris recognition algorithms,” in Intelligent Sensing and Information Processing, 2004. Proceedings of International Conference on (2004), pp. 354–358.

9. J. R. Matey and L. R. Kennell, “Iris recognition–beyond one meter,” in Handbook of Remote Biometrics (Springer, 2009), pp. 23–59.

10. K. W. Bowyer, K. P. Hollingsworth, and P. J. Flynn, “A Survey of Iris Biometrics Research: 2008–2010,” in Handbook of Iris Recognition, M. J. Burge and K. W. Bowyer, eds., Advances in Computer Vision and Pattern Recognition (Springer London, 2013), pp. 15–54.

11. M. M. Khaladkar and S. R. Ganorkar, “Comparative Analysis for Iris Recognition,” Int. J. Eng. Res. Technol. 1, (2012).

12. Y. Du, R. Ives, D. M. Etter, and T. Welch, “A new approach to iris pattern recognition,” in European Symposium on Optics and Photonics for Defence and Security (2004), pp. 104–116.

13. B. Bonney, R. Ives, D. Etter, and Y. Du, “Iris pattern extraction using bit planes and standard deviations,” in Signals, Systems and Computers, 2004. Conference Record of the Thirty-Eighth Asilomar Conference on (2004), Vol. 1, pp. 582–586.

14. B. L. Bonney, Non-Orthogonal Iris Segmentation (2005).

15. J. Daugman, “Iris Recognition,” in Handbook of Biometrics, A. K. Jain, P. Flynn, and A. A. Ross, eds. (Springer US, 2008), pp. 71–90.

16. L. Birgale and M. Kokare, “Recent Trends in Iris Recognition,” in Pattern Recognition, Machine Intelligence and Biometrics (Springer, 2011), pp. 785–796.

17. J. Daugman, “New Methods in Iris Recognition,” IEEE Trans. Syst. Man Cybern. Part B Cybern. 37, 1167–1175 (2007).

18. J. R. Matey, O. Naroditsky, K. Hanna, R. Kolczynski, D. J. LoIacono, S. Mangru, M. Tinker, T. M. Zappia, and W. Y. Zhao, “Iris on the Move: Acquisition of Images for Iris Recognition in Less Constrained Environments,” Proc. IEEE 94, 1936–1947 (2006).


Sir do you have the Matlab code for this iris recognition?

I used Matplotlib and OpenCV for generating the figures in the blog post. However, if you are looking for Matlab code for iris recognition, then you can just Google for Masek’s code (written in Matlab).

Do you have the feature encoding part?

For python?

Hi Akif,

I should have the Python code that I used for the above post. However, I have not verified its output against any standard iris code. In other words, I wrote the code purely for the purpose of concept demonstration. Would you still want it? If you are looking for a standard implementation, I would again recommend that you refer to Masek’s Matlab code.

Actually, I really didn't understand the encoding part. So if you could send me the code, I might at least get an idea about it. I am doing something different from Masek’s Matlab code.

Thank you very much…

akifcelebi@outlook.com

is it possible for two people to have an identical iris! BOTH EYES

Based on what I know, it is very highly improbable.

Can i have your python code?

thank you in advance,

loukramsociety@gmail.com

is the python code still available? I need it for an educational project in college…. may you send it please?

Please see if you can salvage something from https://github.com/indranilsinharoy/phd-artifacts/tree/master/code/proposal/iriscode_gen_using_masek_method . Especially, look into the notebooks Iris_codeGen_Masek-ColorIris.ipynb and Iris_codeGen_Masek-GrayScaleIris.ipynb

Thanks.

May I know how I should specify the minimum and maximum radius parameters to deal with huge dataset?